Performance Testing: A Comprehensive Guide

The speed of a software application shapes the user experience and drives organizational success. Performance testing is an essential strategy for ensuring applications are fast, efficient, and scalable. By rigorously analyzing system speed, stability, and scalability, it identifies bottlenecks and ensures software meets end-user expectations.

This article explores the complexities of performance testing and covers the types of testing required to optimize software across diverse digital landscapes, with the ultimate aim of ensuring that applications run smoothly under a variety of settings and workloads.

- What is Performance Testing?

- Why Performance Automation Testing?

- Types of Performance Testing

- Performance Testing Metrics

- Performance Testing Advantages

- Common Problems in Performance Testing

- How To Do Performance Testing?

- Example: Online Booking System

- Best Practices of Performance Testing

- Conclusion

What is Performance Testing?

Performance testing evaluates how a software application behaves under a specific workload by measuring its speed, scalability, and stability. The goal is to confirm that the application performs at its best and meets its performance criteria. It is an essential validation step before release, because it confirms that the application can meet real-world usage requirements.

The visibility it provides into application performance helps uncover issues that could disrupt the application's functionality.

Key Objectives of Performance Testing

Speed: Determines how quickly the application responds to user requests. Speed is a critical aspect of user satisfaction and can greatly influence the perceived quality of the application.

Scalability: Assesses the application’s capacity to handle increased loads without impacting performance. Scalability testing helps identify the maximum user load the application can support before its performance deteriorates.

Stability: Measures the application's performance over time and under varying load conditions. Stability ensures the application remains functional and performs consistently, even under stressful or peak conditions.

Why Performance Automation Testing?

The primary goal of integrating automation in performance testing is to enhance efficiency while expediting the process. However, there is more to it:

- Performance testing simulates many users in complex scenarios built from repetitive tasks, which are prone to mistakes when performed manually. With automation, testers can reliably detect errors, scalability problems, and performance regressions.

- Automation keeps repeated tasks consistent, whereas manual testing can introduce human bias and variability. Automated tests follow predetermined scripts and configurations uniformly, so every run can be replicated.
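The consistency point can be illustrated with a small sketch. It assumes a hypothetical `Scenario` configuration that carries a fixed random seed; because the schedule of user think times is derived only from that configuration, every automated run replays exactly the same sequence, something a manual tester cannot guarantee. The field names and the 0.5 to 2.0 second think-time range are illustrative assumptions, not part of any particular tool.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """A predetermined, scripted test configuration (hypothetical fields)."""
    name: str
    virtual_users: int
    requests_per_user: int
    seed: int  # fixed seed so every run generates identical think times


def build_schedule(scenario: Scenario) -> list[tuple[int, int, float]]:
    """Return (user_id, request_index, think_time_seconds) for every request.

    The random generator is seeded from the scenario alone, so the same
    scenario always yields the same schedule, run after run.
    """
    rng = random.Random(scenario.seed)
    schedule = []
    for user_id in range(scenario.virtual_users):
        for request_index in range(scenario.requests_per_user):
            think_time = round(rng.uniform(0.5, 2.0), 3)
            schedule.append((user_id, request_index, think_time))
    return schedule


if __name__ == "__main__":
    smoke = Scenario("search-and-book", virtual_users=3, requests_per_user=2, seed=42)
    first_run = build_schedule(smoke)
    second_run = build_schedule(smoke)
    print(len(first_run), first_run == second_run)  # 6 True
```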

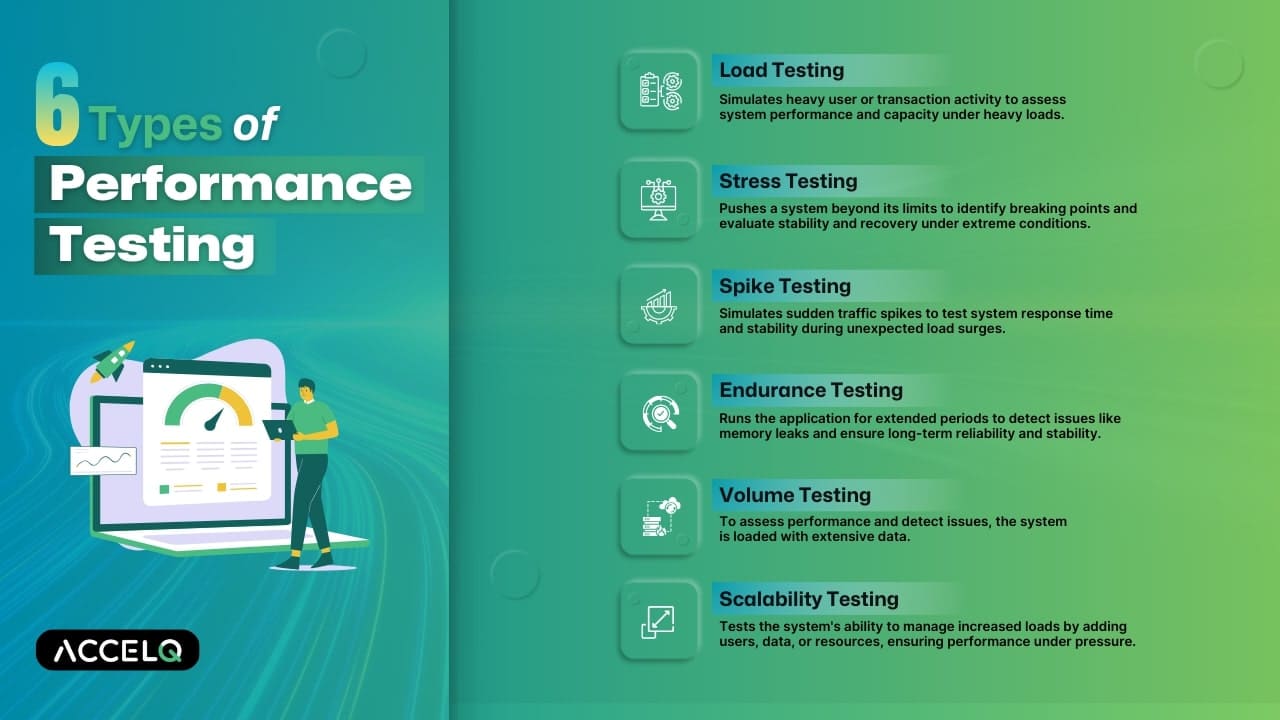

Types of Performance Testing

Load testing

Load testing is an essential performance test that simulates the expected volume of user or transaction activity to assess how a system performs under heavy but realistic loads. It determines the system's maximum operational capacity, reveals its performance constraints, and verifies that it can handle projected traffic without compromising service quality.
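A minimal load-test sketch in Python, assuming a hypothetical `handle_request` function as a stand-in for the system under test (a real test would call the application over HTTP or through a dedicated load-testing tool). Each worker thread in the pool plays the role of one virtual user, and the sketch records the latency of every call.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the system under test (about 10 ms of work)."""
    time.sleep(0.01)
    return payload * 2


def timed_call(payload: int) -> float:
    """Send one request and return its latency in seconds."""
    start = time.perf_counter()
    handle_request(payload)
    return time.perf_counter() - start


def run_load_test(virtual_users: int, requests_per_user: int) -> list[float]:
    """Drive the expected load: `virtual_users` threads share all requests."""
    total_requests = virtual_users * requests_per_user
    # One worker thread per virtual user keeps up to that many requests in flight.
    with ThreadPoolExecutor(max_workers=virtual_users) as pool:
        return list(pool.map(timed_call, range(total_requests)))


if __name__ == "__main__":
    latencies = run_load_test(virtual_users=20, requests_per_user=5)
    print(f"{len(latencies)} requests, slowest {max(latencies) * 1000:.1f} ms")
```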

Stress testing

Stress testing, sometimes called fatigue testing, pushes a system beyond its normal operational limits to determine its breaking point and how stable it remains under extreme conditions. It subjects the system to very high user or transaction loads to expose software defects and assess its ability to recover.
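The search for a breaking point can be sketched as a step-up loop. Here `measure_error_rate` is an assumption: in practice it would run one load stage and report the observed error rate. `simulated_error_rate` is a hypothetical system that starts failing above 400 concurrent users, used only to make the sketch runnable. The 5% threshold and the step sizes are illustrative.

```python
from typing import Callable, Optional


def find_breaking_point(
    measure_error_rate: Callable[[int], float],
    start_users: int = 50,
    step: int = 50,
    max_users: int = 5000,
    error_threshold: float = 0.05,
) -> Optional[int]:
    """Raise the load stage by stage until the error rate crosses the threshold.

    Returns the first user count at which the system "breaks", or None if it
    stays healthy all the way up to max_users.
    """
    users = start_users
    while users <= max_users:
        if measure_error_rate(users) > error_threshold:
            return users
        users += step
    return None


def simulated_error_rate(users: int) -> float:
    """Hypothetical system: requests beyond a capacity of 400 users fail."""
    capacity = 400
    if users <= capacity:
        return 0.0
    return (users - capacity) / users


if __name__ == "__main__":
    print(find_breaking_point(simulated_error_rate))  # 450
```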

Spike testing

Spike testing simulates sudden, unexpected surges in user requests or transactions to check whether a system can handle them. The load rises sharply above normal operational levels for short periods, and the main focus is on the system's response time and stability during these spikes.
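A spike test is usually described as a load profile. The sketch below, with hypothetical parameter names, produces the target number of concurrent users for each second of the test: a steady baseline, a short burst at `peak`, and then a return to the baseline.

```python
def spike_profile(baseline: int, peak: int, total_seconds: int,
                  spike_start: int, spike_length: int) -> list[int]:
    """Target concurrent users for each second of a spike test."""
    profile = []
    for second in range(total_seconds):
        in_spike = spike_start <= second < spike_start + spike_length
        profile.append(peak if in_spike else baseline)
    return profile


if __name__ == "__main__":
    print(spike_profile(baseline=100, peak=1000, total_seconds=10,
                        spike_start=4, spike_length=2))
    # [100, 100, 100, 100, 1000, 1000, 100, 100, 100, 100]
```

A load generator would then adjust its number of active virtual users each second to follow this list.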

Endurance testing

Endurance testing (also known as soak testing) runs the application under sustained load for an extended period to confirm that it keeps performing without deteriorating. It is necessary for finding persistent issues such as memory leaks, which can gradually lower performance or cause system failure, and it helps ensure ongoing application reliability and stability.
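An endurance test usually watches resource usage over time. The sketch below uses Python's standard `tracemalloc` module to sample memory while an operation repeats; `leaky_operation` and `healthy_operation` are hypothetical stand-ins, and the 64 KB tolerance is an arbitrary illustration. Against a real service, memory would more likely be sampled from process monitoring over hours rather than seconds.

```python
import tracemalloc
from typing import Callable

_leaked: list[bytearray] = []  # never cleared, to simulate a memory leak


def leaky_operation() -> None:
    """Hypothetical operation that leaks about 1 KB per call."""
    _leaked.append(bytearray(1024))


def healthy_operation() -> None:
    """Hypothetical operation whose temporary buffer is freed after each call."""
    bytearray(1024)


def memory_samples(operation: Callable[[], None], iterations: int,
                   sample_every: int) -> list[int]:
    """Run `operation` repeatedly, sampling traced memory (bytes) at intervals."""
    tracemalloc.start()
    samples = []
    try:
        for i in range(1, iterations + 1):
            operation()
            if i % sample_every == 0:
                current, _peak = tracemalloc.get_traced_memory()
                samples.append(current)
    finally:
        tracemalloc.stop()
    return samples


def looks_like_leak(samples: list[int], tolerance_bytes: int = 64 * 1024) -> bool:
    """Flag a leak when memory grows by more than the tolerance over the run."""
    return len(samples) >= 2 and samples[-1] - samples[0] > tolerance_bytes


if __name__ == "__main__":
    for op in (healthy_operation, leaky_operation):
        samples = memory_samples(op, iterations=10_000, sample_every=1_000)
        print(op.__name__, looks_like_leak(samples))
```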

Volume testing

Volume testing evaluates a system's ability to handle large amounts of data. It loads the system with data to assess its performance and to identify issues in processing and managing massive data sets.
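A volume test can be sketched by timing the same operation against growing data sets. `process_records` is a hypothetical workload (counting bookings per destination) and the data set sizes are arbitrary; in a real volume test the data would live in the actual database or storage layer. Comparing how the timings grow with size shows whether processing scales roughly linearly or degrades as the volume increases.

```python
import time


def process_records(records: list[dict]) -> dict[str, int]:
    """Hypothetical workload: count bookings per destination."""
    counts: dict[str, int] = {}
    for record in records:
        destination = record["destination"]
        counts[destination] = counts.get(destination, 0) + 1
    return counts


def volume_test(sizes: list[int]) -> dict[int, float]:
    """Time `process_records` on progressively larger synthetic data sets."""
    timings = {}
    for size in sizes:
        data = [{"destination": f"city-{i % 50}"} for i in range(size)]
        start = time.perf_counter()
        result = process_records(data)
        timings[size] = time.perf_counter() - start
        # Sanity check: every record must be counted exactly once.
        if sum(result.values()) != size:
            raise RuntimeError("records were lost during processing")
    return timings


if __name__ == "__main__":
    for size, seconds in volume_test([1_000, 10_000, 100_000]).items():
        print(f"{size:>7} records: {seconds * 1000:.2f} ms")
```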

Scalability testing

Scalability testing evaluates how well a software system handles growing loads. The load can be increased by adding users or data, and the system's resources can be scaled by adding CPUs or memory. It analyzes whether the application can maintain or improve performance as demand grows.
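One common way to read scalability results is to compare measured throughput against perfect linear scaling. The sketch assumes throughput has already been measured at several resource levels (for example, number of nodes); the figures in the usage line are hypothetical, chosen only to show the calculation.

```python
def scaling_efficiency(throughput_by_nodes: dict[int, float]) -> dict[int, float]:
    """Measured throughput divided by ideal linear throughput, per resource level.

    1.0 means throughput grew in proportion to the added resources; values well
    below 1.0 point to a bottleneck that more resources do not remove.
    """
    baseline_nodes = min(throughput_by_nodes)
    per_node = throughput_by_nodes[baseline_nodes] / baseline_nodes
    return {
        nodes: round(tps / (per_node * nodes), 2)
        for nodes, tps in sorted(throughput_by_nodes.items())
    }


if __name__ == "__main__":
    # Hypothetical throughput figures (transactions per second), not real results.
    print(scaling_efficiency({1: 200.0, 2: 380.0, 4: 600.0}))
    # {1: 1.0, 2: 0.95, 4: 0.75}
```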

Performance Testing Metrics

- Response Time: The total time taken to respond to a request.

- Throughput: The number of transactions handled per unit of time, typically per second.

- Error Rate: The percentage of requests that fail during the test.

- Concurrent Users: The number of users accessing the system simultaneously.

- CPU and Memory Utilization: The amount of CPU and memory resources used during the test.
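The first three metrics can be computed from raw request samples with Python's standard library. The sketch assumes each sample records a latency and a success flag (a hypothetical `Sample` type) and that the total test duration is known. It reports the 95th percentile alongside the average, since an average can hide slow outliers. Concurrent users and CPU/memory utilization usually come from the load generator and from system monitoring, so they are left out here.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Sample:
    latency_s: float  # response time of a single request, in seconds
    ok: bool          # False if the request failed


def summarize(samples: list[Sample], duration_s: float) -> dict[str, float]:
    """Compute response time, throughput, and error rate for one test run.

    Assumes at least two samples and a positive test duration.
    """
    latencies = [s.latency_s for s in samples]
    errors = sum(1 for s in samples if not s.ok)
    return {
        "avg_response_s": statistics.mean(latencies),
        # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
        "p95_response_s": statistics.quantiles(latencies, n=100)[94],
        "throughput_tps": len(samples) / duration_s,
        "error_rate_pct": 100 * errors / len(samples),
    }


if __name__ == "__main__":
    # Synthetic samples for illustration: 20 requests, every tenth one fails.
    run = [Sample(latency_s=i / 100, ok=i % 10 != 0) for i in range(1, 21)]
    print(summarize(run, duration_s=10.0))
```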

Performance Testing Advantages

- Identifies Bottlenecks: Helps in pinpointing areas where performance issues occur.

- Improves Scalability: Determines if the system can handle increased loads.

- Enhances User Experience: Ensures the application responds quickly and remains stable under various conditions.

- Reduces Risks: Identifies potential issues before the system goes live, reducing the risk of failure.

- Informs Decision Making: Provides data-driven insights for capacity planning and resource allocation.

Common Problems in Performance Testing

- Test Environment Differences: Discrepancies between the test and production environments can lead to inaccurate results.

- Improper Planning: Without realistic scenarios, the tests might not represent actual usage.

- Resource Intensiveness: Performance testing can require significant computational resources.

- Complex Analysis: Interpreting the results can be complicated, especially when dealing with large amounts of data.

- Tool Limitations: The chosen tools might not support all aspects of the application or system being tested.

How To Do Performance Testing?

Performance testing involves several steps, outlined below. The process runs from identifying the test environment and designing tests that simulate load on a system through to analyzing the results.

Identify the Testing Environment

Understand the hardware, software, and network configurations.
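Recording the environment alongside every result makes differences between the test and production environments easier to spot later. A minimal sketch using Python's standard `platform` and `os` modules; it captures only what the local machine reports, so network configuration and application versions would have to be added from deployment tooling or by hand (an assumption about the setup).

```python
import os
import platform


def describe_environment() -> dict[str, str]:
    """Snapshot of basic hardware and software facts for the test report."""
    return {
        "os": f"{platform.system()} {platform.release()}",
        "machine": platform.machine(),
        "cpu_count": str(os.cpu_count()),
        "python": platform.python_version(),
        "hostname": platform.node(),
    }


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print(f"{key:>10}: {value}")
```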

Determine Performance Metrics

Decide on the success criteria, such as targets for response time, throughput, and resource utilization.
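Writing the criteria down as data makes them checkable after every run. In this sketch the metric names and limits are hypothetical examples, not recommended targets; each criterion states whether the measured value must stay at or below (`max`) or at or above (`min`) its limit.

```python
# Hypothetical acceptance criteria: metric name -> (direction, limit).
CRITERIA = {
    "p95_response_s": ("max", 2.0),   # 95% of requests answered within 2 s
    "error_rate_pct": ("max", 1.0),   # at most 1% failed requests
    "throughput_tps": ("min", 50.0),  # at least 50 transactions per second
}


def evaluate(measured: dict[str, float],
             criteria: dict[str, tuple[str, float]] = CRITERIA) -> dict[str, bool]:
    """Return pass (True) or fail (False) for each criterion."""
    verdict = {}
    for metric, (direction, limit) in criteria.items():
        value = measured[metric]
        verdict[metric] = value <= limit if direction == "max" else value >= limit
    return verdict


if __name__ == "__main__":
    run = {"p95_response_s": 1.4, "error_rate_pct": 0.3, "throughput_tps": 42.0}
    print(evaluate(run))
    # {'p95_response_s': True, 'error_rate_pct': True, 'throughput_tps': False}
```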

Plan and Design Tests

Create realistic user scenarios and prepare test data.

Configure the Test Environment

Set up the necessary tools and resources.

Implement Test Design

Develop and script the tests based on the planned scenarios.

Execute the Tests

Run the tests while monitoring the system's performance.

Analyze and Retest

Analyze the results to identify bottlenecks and retest after making improvements.
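The retest step amounts to comparing a new run against a baseline. The sketch below assumes both runs are summarized as dictionaries of metrics where lower is better (response times, error rates); the 10% tolerance and the metric names are illustrative assumptions.

```python
def find_regressions(baseline: dict[str, float], retest: dict[str, float],
                     tolerance_pct: float = 10.0) -> dict[str, float]:
    """Return metrics that got worse by more than tolerance_pct, with the % change.

    Assumes every metric is "lower is better", such as latency or error rate.
    """
    regressions = {}
    for metric, before in baseline.items():
        after = retest[metric]
        if before == 0:
            if after > 0:
                regressions[metric] = float("inf")  # went from zero to non-zero
            continue
        change_pct = 100 * (after - before) / before
        if change_pct > tolerance_pct:
            regressions[metric] = round(change_pct, 1)
    return regressions


if __name__ == "__main__":
    before = {"p95_response_s": 0.8, "avg_response_s": 0.30, "error_rate_pct": 0.0}
    after = {"p95_response_s": 1.0, "avg_response_s": 0.31, "error_rate_pct": 0.0}
    print(find_regressions(before, after))  # {'p95_response_s': 25.0}
```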

Example: Online Booking System

Scenario:

A travel company wants to ensure that its online booking system can handle a surge in users during a promotional campaign for holiday packages.

Objective:

To verify that the website remains fast and responsive under high traffic conditions.

Performance Testing Conducted:

1. Load Testing

- Process: Simulate multiple users logging on to the website simultaneously to search for and book travel packages.

- Goal: To ensure the system can handle the expected number of users without performance degradation.

2. Spike Testing

- Process: Suddenly increase the number of users far above normal levels for brief periods, then drop it back to normal levels.

- Goal: To check if the system can handle sudden spikes in traffic, mimicking real-world scenarios like the launch of a new discount offer.
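The load test in this example can be sketched as a scripted user journey. `search_packages` and `book_package` are hypothetical stand-ins for the booking site's endpoints (they only sleep for a few milliseconds); a real test would call the actual site. Each virtual user searches, picks a package, and books it, and the sketch collects response times per step so slow transactions can be told apart.

```python
import random
import time
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def search_packages(rng: random.Random) -> list[str]:
    """Hypothetical search endpoint: 5 to 15 ms of simulated work."""
    time.sleep(rng.uniform(0.005, 0.015))
    return ["pkg-1", "pkg-2", "pkg-3"]


def book_package(package_id: str, rng: random.Random) -> str:
    """Hypothetical booking endpoint: 10 to 20 ms of simulated work."""
    time.sleep(rng.uniform(0.010, 0.020))
    return f"booking-for-{package_id}"


def user_journey(user_id: int) -> dict[str, float]:
    """One virtual user: search, choose a package, book it. Returns step timings."""
    rng = random.Random(user_id)  # per-user seed keeps the journey repeatable
    timings = {}
    start = time.perf_counter()
    packages = search_packages(rng)
    timings["search"] = time.perf_counter() - start
    start = time.perf_counter()
    book_package(rng.choice(packages), rng)
    timings["book"] = time.perf_counter() - start
    return timings


def run_booking_load(users: int) -> dict[str, list[float]]:
    """Run all user journeys concurrently and group latencies by step."""
    per_step: dict[str, list[float]] = defaultdict(list)
    with ThreadPoolExecutor(max_workers=users) as pool:
        for timings in pool.map(user_journey, range(users)):
            for step, seconds in timings.items():
                per_step[step].append(seconds)
    return dict(per_step)


if __name__ == "__main__":
    results = run_booking_load(users=50)
    for step, latencies in results.items():
        print(f"{step}: slowest {max(latencies) * 1000:.1f} ms over {len(latencies)} users")
```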

Outcome:

The tests help identify performance issues, ensuring the booking system is robust and user-friendly during peak traffic times.

Best Practices of Performance Testing

Given the importance of performance testing in the software testing ecosystem, the following must be noted:

Early in development:

Performance testing should begin before development is finished. "Shifting left" means moving performance testing earlier in the development cycle, which helps teams identify issues early and fix them quickly, saving time and money.

Avoid assumptions:

Assuming that the results of a limited set of performance tests will hold after the application changes is a recipe for disaster. Rerun performance tests to verify such assumptions.

Test Coverage:

A single performance test scenario is insufficient. Comprehensive performance testing must cover situations beyond the well-specified ones. AI-powered, no-code performance testing can broaden both scenario and test coverage.

Strategy:

Starting with a lighter workload and increasing it gradually produces results that are easier to diagnose and fix, because each problem can be linked to the load level at which it first appeared.
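A gradual ramp-up is typically expressed as a list of load stages. The sketch below, with hypothetical parameter names and values, builds such a plan; each stage would then be run and checked before moving on to the next.

```python
def ramp_up_stages(start_users: int, max_users: int, step: int,
                   hold_seconds: int) -> list[tuple[int, int]]:
    """Return (start_time_s, users) for each stage of a gradual ramp-up.

    Each stage holds its load for `hold_seconds`, so any problem that appears
    can be traced to the load level at which it first showed up.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    stages = []
    users, elapsed = start_users, 0
    while users <= max_users:
        stages.append((elapsed, users))
        elapsed += hold_seconds
        users += step
    return stages


if __name__ == "__main__":
    print(ramp_up_stages(start_users=10, max_users=50, step=10, hold_seconds=60))
    # [(0, 10), (60, 20), (120, 30), (180, 40), (240, 50)]
```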

Bring awareness:

Isolating functions for performance testing is essential, but component-level results do not reflect how the whole system performs. It is therefore vital to raise awareness of untested elements and to use available resources to cover as wide a range of functions as possible.

Choose the right test automation tool:

For reliable performance test results, the test automation platform must exercise the product the way real users would. It should also accommodate changes to performance test settings.

Conclusion

Automating performance testing speeds up testing while ensuring broad test coverage. A strong emphasis on performance testing helps ensure that the application operates as intended and provides a good user experience. Because performance influences user acceptance and advocacy, implementing an effective performance testing approach is one of the pillars of producing great software. Connect with our ACCELQ team to get your personalized performance testing strategy.

Suma Ganji

Senior Content Writer

Expertly navigating technical and UX writing, she crafts captivating content that hits the mark every time. With a keen SEO understanding, her work consistently resonates with readers while securing prime online visibility. When the day's work ends, you'll find her immersed in literary escapades in her quaint book house.
